That’ll do fine!: A coarse lexical resource for English-Hindi MT, using polylingual topic models

Abstract

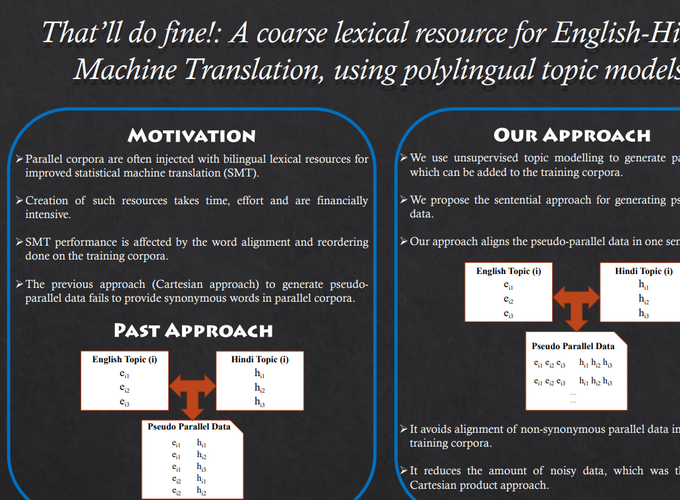

Parallel corpora are often injected with bilingual lexical resources for improved Indian language machine translation (MT). In absence of such lexical resources, multilingual topic models have been used to create coarse lexical resources in the past, using a Cartesian product approach. Our results show that for morphologically rich languages like Hindi, the Cartesian product approach is detrimental for MT. We then present a novel ‘sentential’ approach to use this coarse lexical resource from a multilingual topic model. Our coarse lexical resource when injected with a parallel corpus outperforms a system trained using parallel corpus and a good quality lexical resource. As demonstrated by the quality of our coarse lexical resource and its benefit to MT, we believe that our sentential approach to create such a resource will help MT for resource-constrained languages.